Why Automating GP Document Collection Is Harder Than It Looks

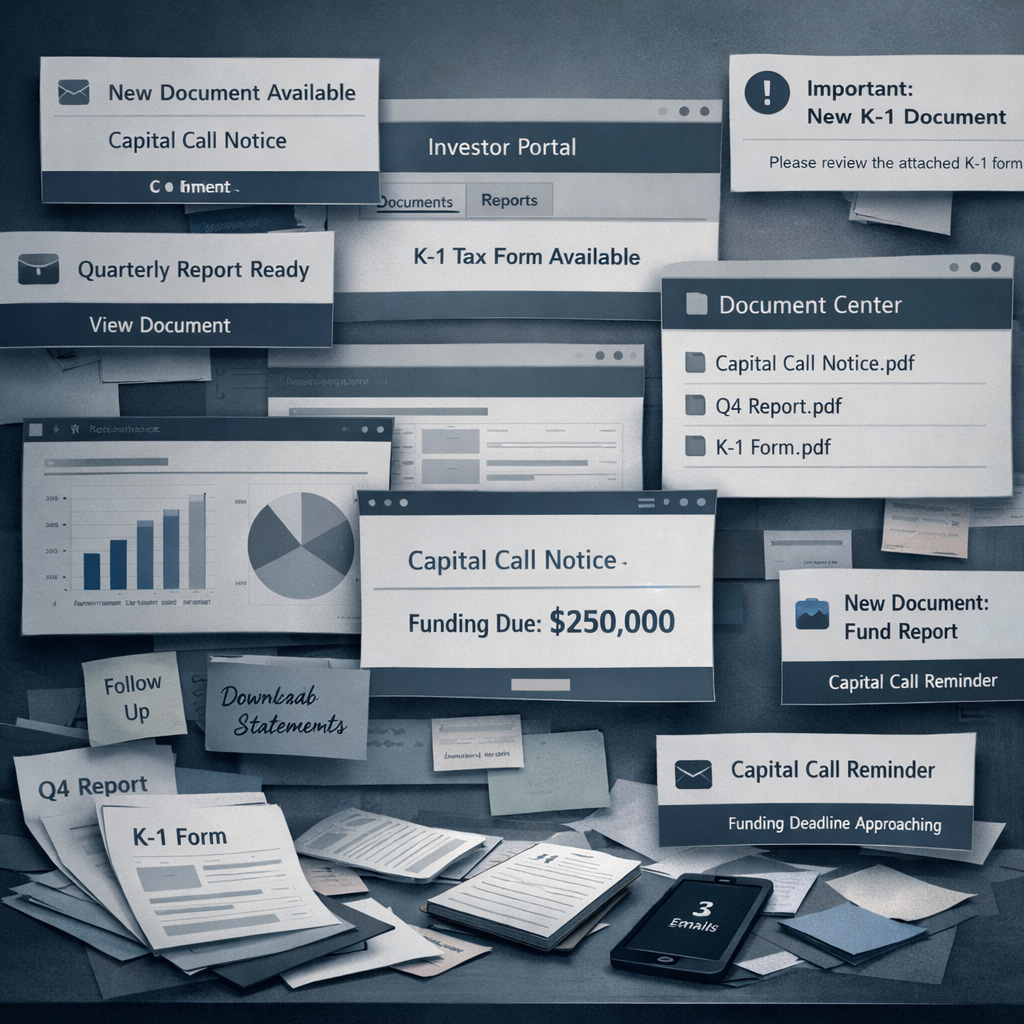

There's a conversation happening at a growing number of LP teams right now. The document collection problem is real and well understood — hours lost to portal logins, missed notifications, credential chaos across dozens of fund relationships. And the instinct, increasingly, is that it should be automatable. The tools exist. AI is everywhere. Surely someone can just build this.

It's a reasonable instinct. But it's also one that the private markets industry has acted on before — with APIs, with robotic process automation, and now with agentic AI — and the results have consistently been more complicated than the initial optimism suggested.

This post traces that history, explains why the problem remains genuinely hard, and sets out what a purpose-built solution needs to handle to be reliable in production.

A brief history of trying to solve this

The API era

The cleanest theoretical solution to GP document collection has always been a direct API integration: LP systems connect programmatically to GP portals, authenticate once, and pull documents as they become available. No browser automation, no email parsing, no credential management overhead.

The problem is that most GP portals have never prioritised rich LP-facing APIs. Portal providers serve GP clients, not LP clients, and GPs have limited commercial incentive to push for LP workflow integrations. The portals that do expose APIs tend to offer limited functionality (authentication, basic document retrieval) without the depth needed to replicate a full document collection workflow. And the long tail of smaller or older portals often has no API at all.

The API dream hasn't entirely died — and as standards like ILPA's data initiatives evolve, the landscape may improve — but for most LP teams today, API coverage across their full GP universe is not a realistic foundation for a document collection solution.

The RPA era

If portals wouldn't expose APIs, the next logical approach was to automate the human workflow directly: use robotic process automation tools to navigate portal UIs, log in, find documents, and download them — just as a person would, but without the person.

RPA tools like UiPath and Automation Anywhere made this accessible to non-engineers, and a number of LP teams and their service providers built portal automation workflows on this foundation. Some of these still run today.

The fundamental weakness of RPA in this context is brittleness. GP portal interfaces change without notice. A button moves, a login flow is updated, a portal migrates to a new platform — and the automation script breaks silently. In a document collection context, silent failure is particularly dangerous: the system appears to be running, but documents are not being collected, and nobody knows until something downstream — a missed capital call, an incomplete quarterly review — surfaces the gap.

Maintaining RPA workflows across a large and evolving GP portal universe requires constant human oversight, regular script updates, and a tolerance for periodic failures that undermines much of the operational value the automation was meant to create.

The agentic AI era

The latest chapter is agentic AI. Where RPA followed rigid scripts, AI agents can reason about what they're seeing, adapt to interface changes, and handle the ambiguity that breaks rule-based automation. The barrier to entry has dropped further: with modern AI coding tools, a technically literate analyst can sketch a working document collection agent in an afternoon.

This is genuinely exciting — and genuinely dangerous to underestimate. Agentic approaches are meaningfully more robust than RPA for this problem. But building a production-grade agentic document collection system that runs reliably across a large GP universe involves a set of challenges that aren't obvious until you're deep in the implementation.

The real challenges of building this yourself

The automation flow itself

The core workflow sounds simple: scan an email notification, identify the relevant portal, retrieve credentials, navigate to the document, download it, and file it correctly. In practice, each of those steps involves branching complexity.

Email notifications arrive in dozens of formats — some with attachments, some with direct download links, some with portal links that require navigation once authenticated, some that bundle multiple documents, some that refer to documents not yet available on the portal. The agent needs to handle all of these reliably, including knowing when to wait and retry rather than failing immediately. Building and testing this flow across the full diversity of GP communication styles is a significant undertaking.
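To make the branching concrete, here is a minimal sketch in Python. It assumes a hypothetical, heavily simplified `Notification` shape and only three of the routes described above; `classify`, `fetch_with_retry`, and `NotYetAvailable` are illustrative names, not part of any real library. A real parser would also need to handle bundled documents and far messier link formats.

```python
import time
from dataclasses import dataclass, field
from enum import Enum


class Route(Enum):
    ATTACHMENT = "attachment"      # document arrived with the email itself
    DIRECT_LINK = "direct_link"    # link points straight at a file
    PORTAL_LOGIN = "portal_login"  # link leads to a portal that needs navigation


@dataclass
class Notification:
    # Hypothetical, simplified view of a parsed GP email.
    attachments: list = field(default_factory=list)
    links: list = field(default_factory=list)


def classify(note: Notification) -> Route:
    """Pick a handling route using deliberately naive heuristics."""
    if note.attachments:
        return Route.ATTACHMENT
    # Assumption: a link ending in .pdf is a direct download.
    if any(link.lower().endswith(".pdf") for link in note.links):
        return Route.DIRECT_LINK
    return Route.PORTAL_LOGIN


class NotYetAvailable(Exception):
    """Raised by a fetcher when the portal does not list the document yet."""


def fetch_with_retry(fetch, attempts=4, base_delay=1.0, sleep=time.sleep):
    """Call fetch(), waiting with exponential backoff while the document is missing."""
    for attempt in range(attempts):
        try:
            return fetch()
        except NotYetAvailable:
            if attempt == attempts - 1:
                raise  # give up loudly rather than fail silently
            sleep(base_delay * 2 ** attempt)
```

Passing `sleep` as a parameter is a design choice for testability: production code would use real delays (likely minutes or hours rather than seconds), while tests can record the delays without waiting.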

Credential and MFA handling

Storing and managing credentials for dozens of GP portals securely is a non-trivial engineering problem. Credentials need to be encrypted at rest and in transit, with access controls and audit trails. MFA workflows — authenticator apps, SMS codes, hardware tokens — require infrastructure to handle programmatically, which is both technically complex and security-sensitive.

Most homegrown solutions start with credentials in a config file or a shared spreadsheet. This works until it doesn't: until someone leaves the team, until a portal changes its password or MFA requirements, until a security audit asks how portal access is managed and the answer is a spreadsheet.
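For the authenticator-app case specifically, the code generation itself is a published standard (TOTP, RFC 6238) and can be sketched with the Python standard library alone. The function name `totp` and its parameters are illustrative. The sketch says nothing about the harder parts: storing the shared secret encrypted, controlling who can read it, and handling SMS codes or hardware tokens, which need entirely different infrastructure.

```python
import base64
import hashlib
import hmac
import struct
import time
from typing import Optional


def totp(secret_b32: str, at: Optional[float] = None,
         step: int = 30, digits: int = 6) -> str:
    """Compute a time-based one-time password (RFC 6238, HMAC-SHA1)."""
    key = base64.b32decode(secret_b32, casefold=True)
    now = time.time() if at is None else at
    counter = int(now // step)  # number of time steps since the Unix epoch
    digest = hmac.new(key, struct.pack(">Q", counter), hashlib.sha1).digest()
    # Dynamic truncation (RFC 4226): the low nibble of the last byte picks an offset.
    offset = digest[-1] & 0x0F
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)
```

The secret passed in here is exactly the kind of value that should never sit in a config file: anyone holding it can generate valid codes indefinitely.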

Cybersecurity

An automated agent that logs into external portals on behalf of an LP team is a significant attack surface. Portals can serve malicious content. Downloaded files can contain malware. An agent operating without appropriate sandboxing and security controls can inadvertently expose the LP's internal systems to threats introduced via the document retrieval process.

This is not a theoretical risk. Any production document collection system needs to handle downloaded content safely — scanning files, isolating execution environments, and ensuring that the automation pipeline cannot be used as a vector for introducing malicious content into internal systems.
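As one small piece of that, a first gate on downloaded bytes might look like the sketch below. It is not malware scanning and does not replace sandboxing; it only rejects files that are oversized or do not start with an expected file signature, and records a hash for later reference. The size limit, the allowed types, and the name `inspect_download` are assumptions for illustration.

```python
import hashlib

MAX_BYTES = 50 * 1024 * 1024  # assumed upper bound for a fund document

# Leading bytes ("magic numbers") of the formats we choose to accept.
ALLOWED_SIGNATURES = {
    "pdf": b"%PDF-",
    "zip-based (xlsx, docx)": b"PK\x03\x04",
}


def inspect_download(data: bytes) -> dict:
    """Reject unexpected content early and fingerprint what is accepted."""
    if len(data) > MAX_BYTES:
        raise ValueError("file exceeds size limit")
    kind = next(
        (name for name, sig in ALLOWED_SIGNATURES.items() if data.startswith(sig)),
        None,
    )
    if kind is None:
        raise ValueError("unrecognised file signature")
    return {
        "kind": kind,
        "size": len(data),
        "sha256": hashlib.sha256(data).hexdigest(),
    }
```

A matching signature proves very little on its own, since a malicious PDF still starts with `%PDF-`. The check is useful mainly because it runs before the file reaches any parser or internal system, and because the recorded hash ties the file to whatever scanning happens next.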

Agent control and restrictions

Agentic AI systems that operate with broad permissions are inherently risky. An agent that can log into portals, navigate interfaces, and download files needs careful constraint — it should do exactly what it is supposed to do and nothing more. Defining those constraints robustly, testing them, and ensuring they hold as the underlying AI models are updated is an ongoing engineering and governance challenge.

The risk of an insufficiently constrained agent in a financial context — one that inadvertently takes an action on a portal it wasn't supposed to take, or that accesses data beyond its intended scope — is not trivial.
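One common way to express "exactly what it is supposed to do and nothing more" is a deny-by-default check that sits between the agent and the browser. The sketch below assumes a hypothetical design in which every proposed step is reduced to an action name and a target URL; the action names, the host, and `authorize` are all illustrative.

```python
from urllib.parse import urlparse

# Deny by default: anything not listed here is refused.
ALLOWED_ACTIONS = frozenset({"navigate", "read", "download"})


class PolicyViolation(Exception):
    """Raised when the agent proposes a step outside its mandate."""


def authorize(action: str, url: str, allowed_hosts: frozenset) -> None:
    """Raise PolicyViolation unless the action and target are both permitted."""
    if action not in ALLOWED_ACTIONS:
        raise PolicyViolation(f"action not permitted: {action}")
    parsed = urlparse(url)
    if parsed.scheme != "https":
        raise PolicyViolation(f"non-HTTPS target: {url}")
    if parsed.hostname not in allowed_hosts:
        raise PolicyViolation(f"host not on allowlist: {parsed.hostname}")


# Usage with a hypothetical portal host:
hosts = frozenset({"portal.example-gp.com"})
authorize("download", "https://portal.example-gp.com/docs/q3.pdf", hosts)  # passes
```

Because the check is ordinary code rather than a prompt instruction, it behaves the same way when the underlying model is updated, which is the property the paragraph above is asking for.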

Auditability

In a private markets context, knowing what your document collection system has done — and hasn't done — is operationally critical. Which documents were collected, when, and from which source? Which portal access attempts failed, and why? Which notifications were received but not yet processed?

A production system needs a complete, queryable audit trail. Without it, reconciling the document layer against expected deliverables is manual and error-prone — which is precisely the kind of work the automation was supposed to eliminate. Building robust logging and auditability into a homegrown system is rarely prioritised at the outset and expensive to retrofit.
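A minimal sketch of what "queryable" can mean, using SQLite from the Python standard library. The table layout, the three outcome values, and the function names are assumptions chosen to mirror the questions above, not a description of any particular product.

```python
import sqlite3
from datetime import datetime, timezone


def open_audit_log(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) an audit log with one row per collection event."""
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS audit_events (
               id          INTEGER PRIMARY KEY,
               occurred_at TEXT NOT NULL,
               portal      TEXT NOT NULL,
               document    TEXT,
               outcome     TEXT NOT NULL
                           CHECK (outcome IN ('collected', 'failed', 'pending')),
               detail      TEXT
           )"""
    )
    return conn


def record(conn, portal, outcome, document=None, detail=None):
    """Append one event with a UTC timestamp; rows are never updated in place."""
    conn.execute(
        "INSERT INTO audit_events (occurred_at, portal, document, outcome, detail) "
        "VALUES (?, ?, ?, ?, ?)",
        (datetime.now(timezone.utc).isoformat(), portal, document, outcome, detail),
    )
    conn.commit()


def failures(conn):
    """Answer: which portal access attempts failed, and why?"""
    return conn.execute(
        "SELECT portal, detail FROM audit_events "
        "WHERE outcome = 'failed' ORDER BY id"
    ).fetchall()
```

The `pending` outcome is what lets the log answer the third question: a notification that was received but never followed by a `collected` or `failed` row is a gap that can be found with a query rather than discovered downstream.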

Continuous improvement

A document collection system that works at launch will degrade over time without active investment. Why is a particular portal failing consistently? What is the hit rate across the GP universe, and how is it trending? What happens when the underlying LLM powering the agent is deprecated or replaced with a new version that behaves differently?

These are not one-time engineering problems — they are ongoing operational questions that require monitoring infrastructure, evaluation frameworks, and someone with the expertise and bandwidth to act on them. For most LP teams, this is not a sustainable internal commitment.
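The first two questions reduce to simple arithmetic once attempts are logged. As a sketch, assuming each attempt is recorded as a hypothetical `(portal, succeeded)` pair and that 90% is an acceptable threshold (both assumptions for illustration):

```python
from collections import defaultdict


def hit_rates(attempts):
    """Return the success rate per portal from (portal, succeeded) pairs."""
    totals = defaultdict(lambda: [0, 0])  # portal -> [successes, attempts]
    for portal, succeeded in attempts:
        totals[portal][1] += 1
        if succeeded:
            totals[portal][0] += 1
    return {portal: ok / total for portal, (ok, total) in totals.items()}


def flag_degraded(rates, threshold=0.9):
    """List portals whose hit rate has fallen below the threshold."""
    return sorted(portal for portal, rate in rates.items() if rate < threshold)
```

Running the same calculation before and after a model change gives a basic regression check: if hit rates drop across many portals at once, the model update is a more likely cause than any single portal's redesign.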

Data confidentiality

An agent operating across GP portals on behalf of an LP team is handling some of the most sensitive data in the organisation — fund positions, capital commitments, financial statements, tax documents. Ensuring that this data is handled in accordance with the LP's confidentiality obligations, that it doesn't transit through third-party infrastructure inappropriately, and that the agent's behaviour respects data boundaries requires deliberate design and ongoing governance.

This is particularly relevant as agentic AI systems increasingly rely on cloud-hosted models and infrastructure. The confidentiality implications of routing sensitive fund documents through external AI services need to be understood and managed explicitly.

What purpose-built automation actually delivers

The alternative to building internally is adopting infrastructure that was designed specifically for this problem — built around how private markets document delivery actually works, hardened against the failure modes that homegrown solutions consistently encounter, and maintained continuously as the GP portal landscape and the underlying AI models evolve.

A purpose-built solution handles the full stack: continuous monitoring of GP email notifications across all managers, automated portal access with secure credential and MFA management, document classification and linking by fund, manager, and reporting period, and direct integration with downstream data extraction and reporting workflows. Critically, it comes with the audit trail, security controls, confidentiality architecture, and continuous improvement infrastructure that production use requires — built in from the start, not retrofitted under pressure.

The difference from a homegrown solution is not primarily capability. It is reliability, maintainability, and the governance infrastructure that use in financial services requires. A purpose-built system has been tested across the full diversity of GP portal types and communication formats, including the edge cases and legacy systems that break naive automation. It is maintained by a team whose entire focus is keeping it current. And it improves continuously as new portals are onboarded, hit rates are monitored, and the underlying models are evaluated and updated.

How Tamarix approaches this

Tamarix was built from the ground up to handle the full complexity of GP document collection for LP teams — not as a generic automation tool applied to the problem, but as infrastructure designed around how private markets document delivery actually works.

The platform runs always-on monitoring across GP email notifications and LP portals, handling the full diversity of notification formats and portal types across the GP universe. Credential and MFA management is handled securely on behalf of clients, with encryption, access controls, and audit trails built in. Downloaded documents are handled safely, with security controls designed to protect LP internal systems from content introduced via the collection pipeline.

Agent behaviour is constrained and governed — the system does exactly what it is supposed to do, with complete auditability of every action taken and every failure encountered. LP teams have visibility into collection status across their full GP universe: which documents have been collected, which portal access attempts are failing, and why. This monitoring layer feeds a continuous improvement process — hit rates are tracked, failure patterns are diagnosed, and the system is updated as portals evolve and new AI capabilities become available.

Data confidentiality is treated as a first-order concern. The architecture is designed to ensure that sensitive fund documents are handled in accordance with LP confidentiality obligations, with clear controls over how data transits through the system and where it resides.

Because document collection is the foundation of Tamarix's broader LP operating system, captured documents flow directly into data extraction, validation, NAV monitoring, performance reporting, and analytics workflows. The document layer is always complete, always current, and always connected — regardless of how many fund relationships the portfolio contains or how the GP portal landscape evolves.

Ready to see how Tamarix handles document collection across your full GP universe? Schedule a call with our team.

Frequently asked questions

Why haven't GP portals solved this with APIs?

Most GP portal providers serve GP clients rather than LP clients, and GPs have limited commercial incentive to push for rich LP-facing API integrations. The portals that do expose APIs tend to offer limited functionality, and the long tail of smaller or legacy portals often has no API at all. For most LP teams, API coverage across their full GP universe is not a realistic foundation for a document collection solution today.

Why did RPA-based GP portal automation fail?

RPA scripts follow rigid rules that break when portal interfaces change, which they do frequently and without notice. A button moves, a login flow updates, a portal migrates to a new platform, and the script fails silently. In a document collection context, silent failure is particularly dangerous: documents stop being collected, but nothing alerts the LP team until a downstream workflow surfaces the gap.

What makes agentic AI better than RPA for document collection?

AI agents can reason about what they're seeing and adapt to interface changes in ways that rule-based RPA cannot. This makes them meaningfully more robust for navigating the diversity of GP portals. However, building a production-grade agentic system involves significant challenges around credential management, cybersecurity, agent control, auditability, confidentiality, and continuous improvement that are not obvious at the outset.

What are the cybersecurity risks of automated GP portal access?

An automated agent logging into external portals on behalf of an LP team is a meaningful attack surface. Portals can serve malicious content, and downloaded files can contain malware. Without appropriate sandboxing and security controls, the document collection pipeline can become a vector for introducing threats into LP internal systems. Production document collection infrastructure needs to handle downloaded content safely and isolate execution environments appropriately.

Why is auditability important in automated document collection?

In a private markets context, knowing exactly what has been collected, when, and from which source, as well as which attempts failed and why, is operationally critical. Without a complete audit trail, reconciling the document layer against expected deliverables is manual and error-prone. Audit trails are also increasingly required by compliance frameworks governing LP data handling.

What happens when the AI models powering document collection are updated or deprecated?

This is one of the less visible but significant ongoing challenges of homegrown agentic solutions. When a new LLM version is released or an existing model is deprecated, agent behaviour can change in ways that break collection workflows. A purpose-built solution manages model evaluation and updates as part of its continuous improvement process, so LP teams don't need to absorb this as an internal engineering responsibility.

How does Tamarix ensure data confidentiality in document collection?

Tamarix treats data confidentiality as a first-order architectural concern. The system is designed to ensure that sensitive fund documents are handled in accordance with LP confidentiality obligations, with explicit controls over data transit and residency. Agent behaviour is constrained and governed to ensure it operates only within defined boundaries, and the system does not route sensitive data through external infrastructure inappropriately.

How does Tamarix differ from building a document collection agent internally?

Tamarix was designed specifically for private markets document collection, not as a generic automation tool. It has been built and tested across the full diversity of GP portal types, notification formats, and document conventions. It includes production-grade credential management, cybersecurity controls, auditability, and confidentiality architecture from the outset. It is maintained continuously as portals evolve and AI models are updated. And it connects directly to downstream LP workflows (data extraction, monitoring, reporting) in ways that standalone internal tools typically don't.